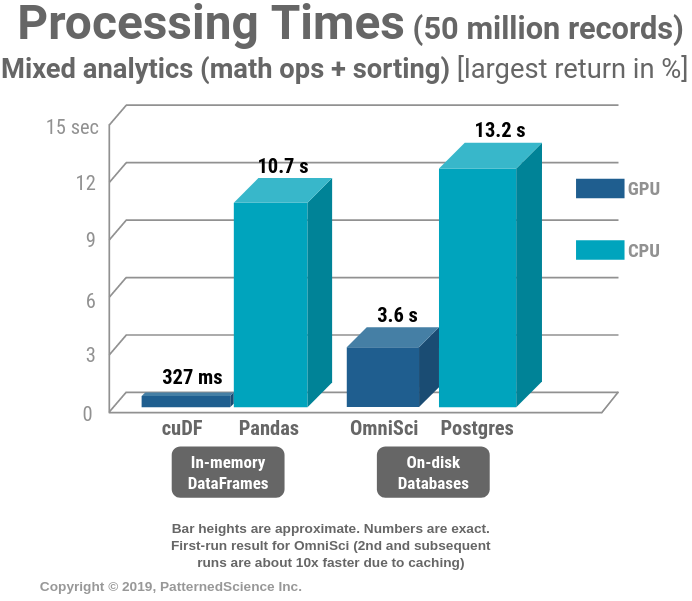

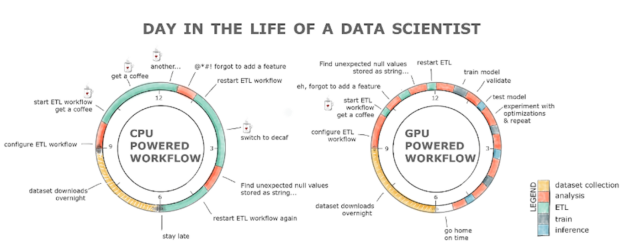

Talk/Demo: Supercharging Analytics with GPUs: OmniSci/cuDF vs Postgres/Pandas/PDAL | Masood Khosroshahy (Krohy), Senior Solution Architect (AI & Big Data)

Panda RGB GPU Backplate Custom Made for ANY Graphics Card Model now with Vent Cut Outs and ARGB (Addressable LEDs) - V1 Tech

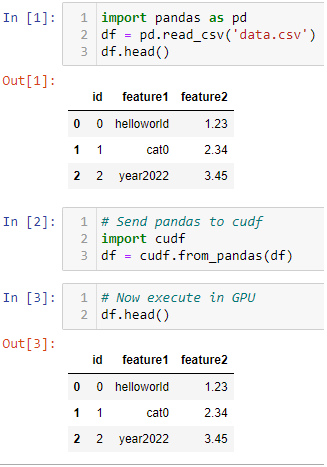

Gilberto Titericz Jr on Twitter: "Want to speedup Pandas DataFrame operations? Let me share one of my Kaggle tricks for fast experimentation. Just convert it to cudf and execute it in GPU

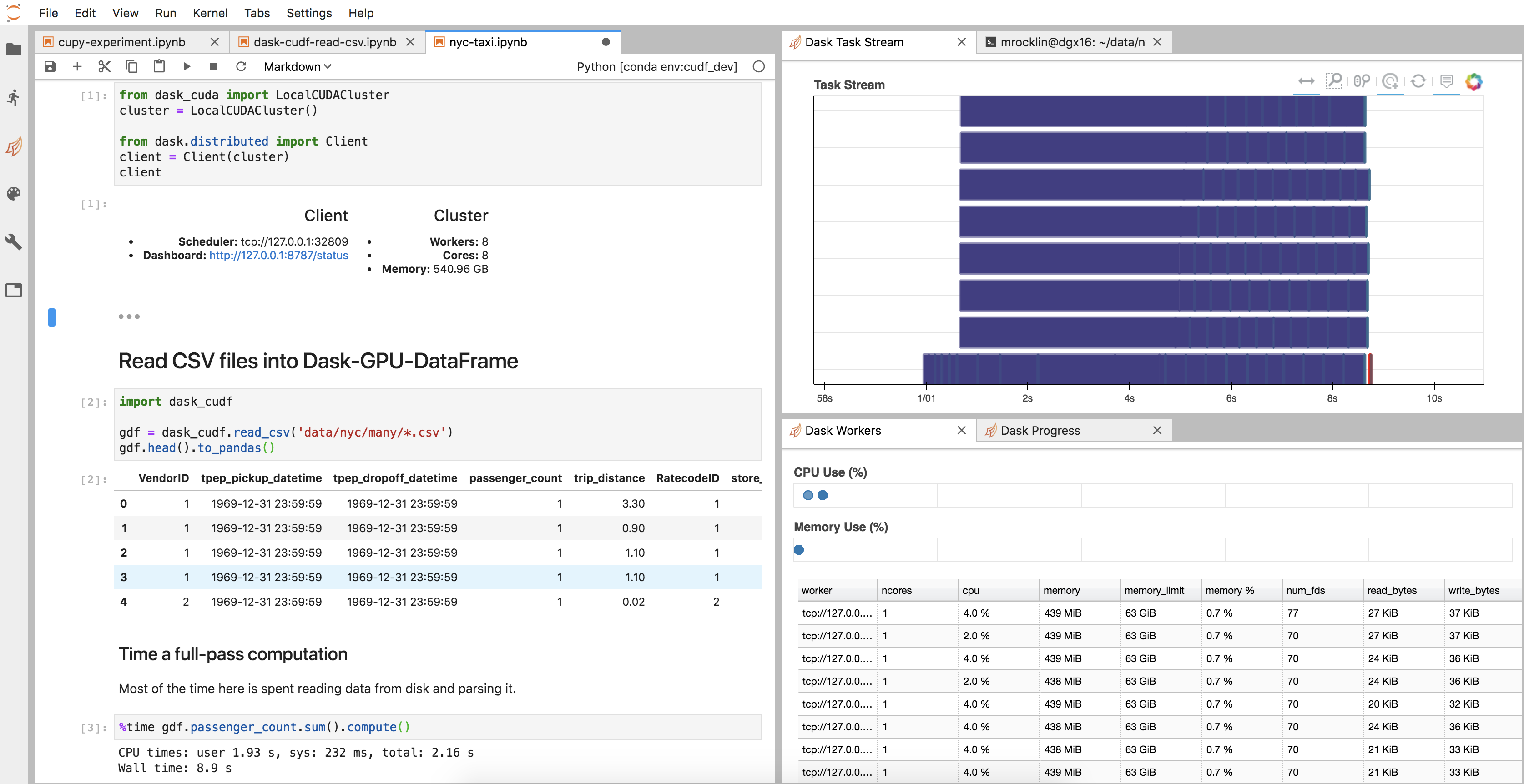

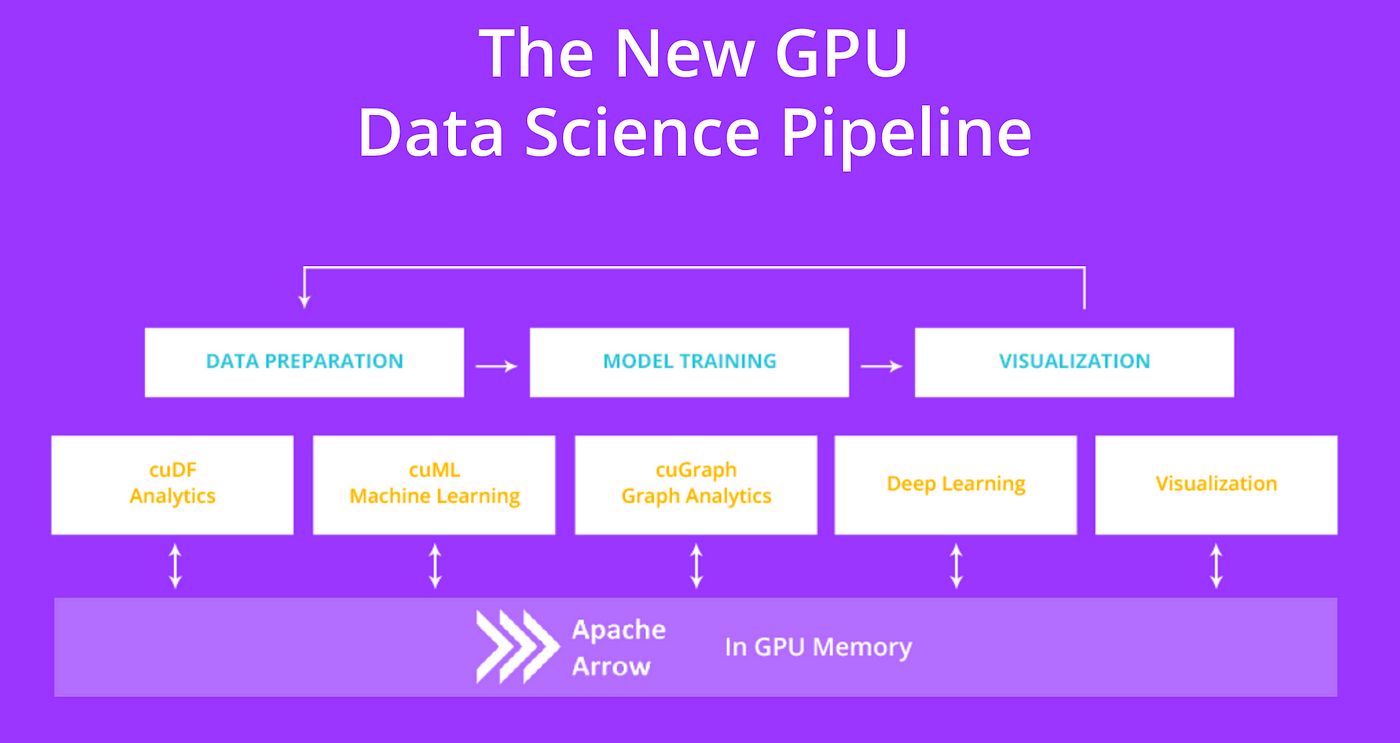

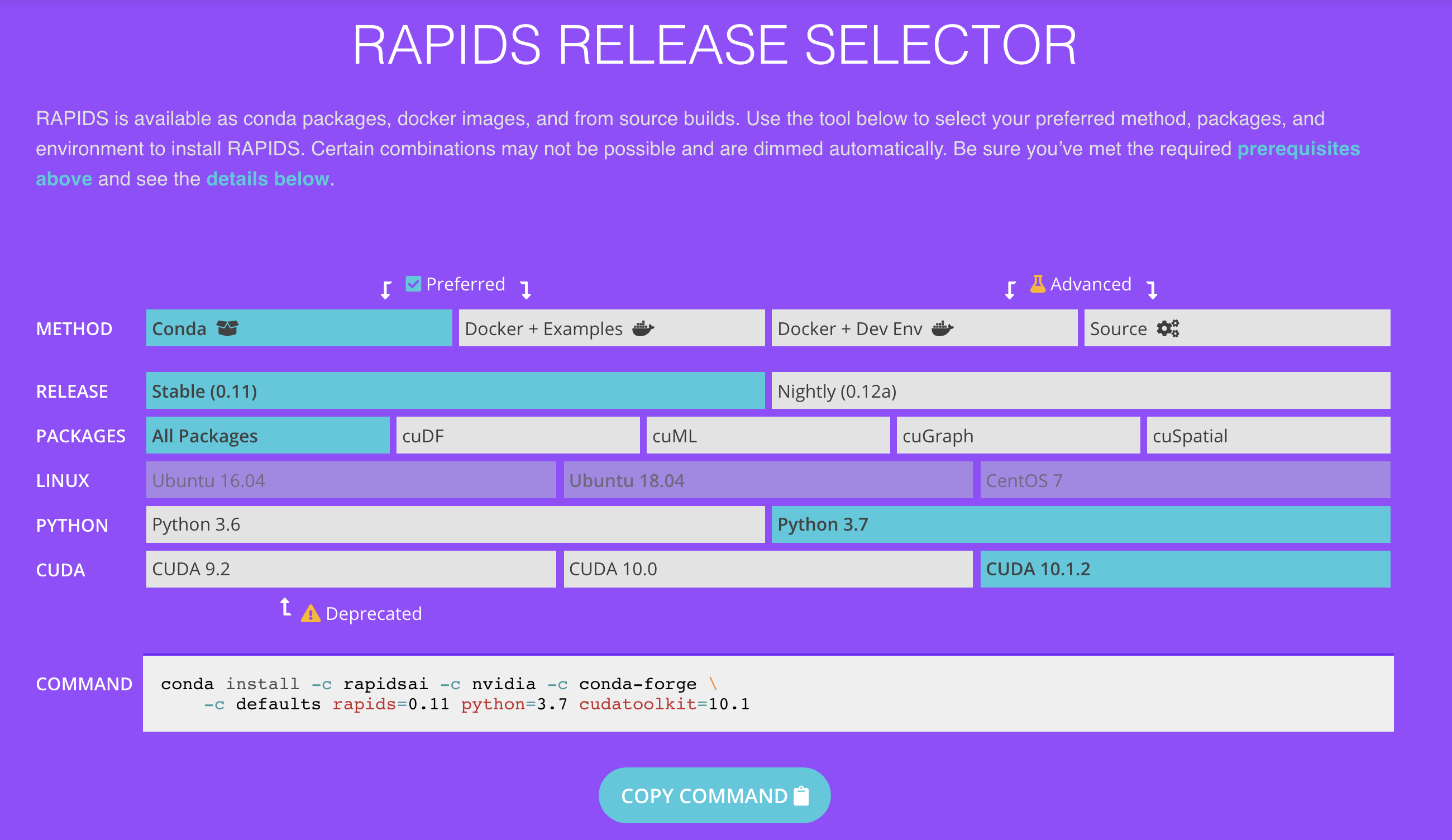

Python Pandas Tutorial – Beginner's Guide to GPU Accelerated DataFrames for Pandas Users | NVIDIA Technical Blog

Pandas DataFrame Tutorial - Beginner's Guide to GPU Accelerated DataFrames in Python | NVIDIA Technical Blog